The landscape of digital creativity is shifting at an unprecedented pace. In just a few years, we have moved from basic image filters to sophisticated generative systems capable of drafting entire user interfaces, cinematic videos, and complex illustrations from a single line of text. However, this rapid growth creates a major challenge for professionals: with dozens of new models launching every month, how can you identify the one that delivers the highest quality without a reliable AI design benchmark?

Traditional technical metrics often fail to capture the nuance of “good design.” A model might be mathematically efficient but produce visuals that lack aesthetic harmony or functional logic. This creates a vacuum for a reliable, human-centric AI design benchmark that can separate the hype from the actual utility.

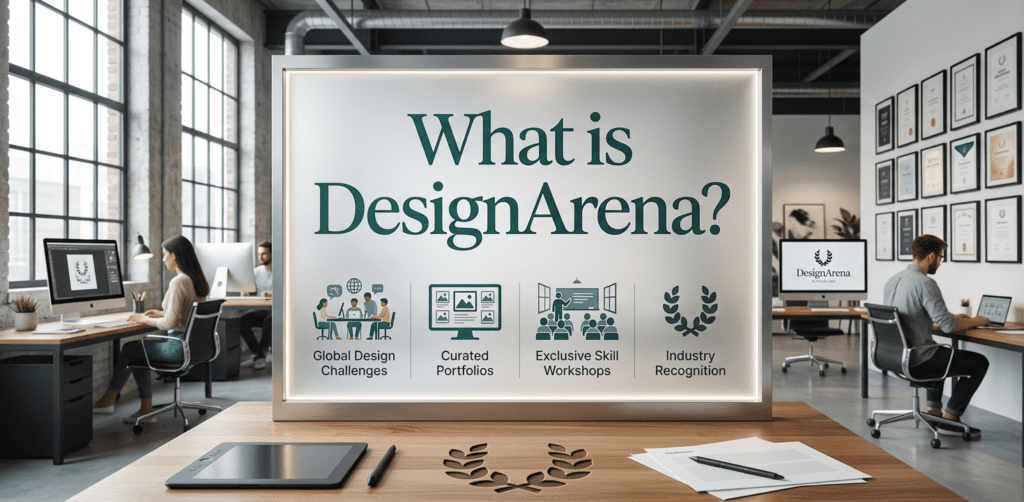

Enter DesignArena—a platform designed to bring transparency, data-driven rankings, and human intuition to the world of generative AI.

What is DesignArena?

DesignArena is a specialized AI design benchmark platform that functions as a competitive testing ground for generative models. Unlike static benchmarks that rely on automated scripts, DesignArena leverages the collective intelligence of the design community to evaluate visual outputs.

It provides a structured environment where different AI engines go head-to-head to solve the same creative challenges.

At its core, DesignArena is an AI model comparison platform that prioritizes visual quality assessment. It doesn’t just tell you that a model is fast; it shows you whether its output is actually better than its competitors.

By presenting results side-by-side and allowing users to vote on their preferences, the platform creates a living, breathing generative AI leaderboard that reflects real-world design standards.

Whether you are looking for an AI-generated UI comparison or high-fidelity image evaluation, DesignArena provides the infrastructure to see which models are leading the industry and which ones are falling behind. It aggregates subjective “taste” into measurable, actionable data through large-scale participation.

The Mechanics: How This AI Design Benchmark Operates

Understanding how DesignArena works is crucial to appreciating the validity of its rankings. The platform utilizes a rigorous methodology to ensure that every comparison is fair and that the results are statistically significant. You can think of it as a continuous, high-stakes tournament for algorithms.

Step 1: Uniform Prompting

To maintain a level playing field, DesignArena issues the exact same prompt to multiple AI models simultaneously. This ensures that no model has an unfair advantage due to “prompt engineering.” If the prompt is “A minimalist dashboard for a fintech app,” every model involved in that round must interpret and execute that specific brief.

Step 2: Anonymous Generation

One of the most critical features of the platform is its commitment to blind A/B testing for AI models. When you are presented with two or more designs, you have no idea which model produced which image.

This removes brand bias—preventing users from voting for a well-known name like “Midjourney” or “DALL-E” simply because they recognize the brand. You are forced to vote based solely on the visual quality of the output.
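Steps 1 and 2 can be sketched together in a few lines of Python. This is only an illustration under stated assumptions, not DesignArena's actual implementation: the names `BlindRound`, `make_blind_round`, and `reveal_winner` are hypothetical, and the `generators` argument stands in for real model calls.

```python
import random
from dataclasses import dataclass


@dataclass
class BlindRound:
    prompt: str
    outputs: dict      # neutral label -> output shown to the voter
    answer_key: dict   # neutral label -> model name (never shown to the voter)


def make_blind_round(prompt, generators, rng=None):
    """Send one prompt to every model and hide which model made which output.

    `generators` maps a model name to a callable that takes the prompt and
    returns an output (hypothetical stand-ins for real model calls).
    """
    rng = rng or random.Random()
    names = list(generators)
    rng.shuffle(names)  # random order, so position does not reveal the model
    labels = [chr(ord("A") + i) for i in range(len(names))]
    # Every model receives the identical prompt (uniform prompting).
    outputs = {label: generators[name](prompt) for label, name in zip(labels, names)}
    answer_key = dict(zip(labels, names))
    return BlindRound(prompt, outputs, answer_key)


def reveal_winner(blind_round, chosen_label):
    """Map the voter's chosen label back to a model name after the vote."""
    return blind_round.answer_key[chosen_label]
```

The voter only ever sees `outputs`, keyed by neutral labels such as "A" and "B". The mapping back to model names stays in `answer_key` and is consulted only once the vote has been recorded.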

Step 3: Crowdsourced Voting

DesignArena relies on crowdsourced design evaluation. As a user, you are shown side-by-side AI design testing results. You evaluate the composition, color theory, typography, and relevance to the prompt.

Once you select the winner, your vote is recorded and integrated into the broader dataset. This democratic approach ensures that the benchmark reflects a diverse range of professional and aesthetic perspectives.

Step 4: The Elo Rating System

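The platform uses an Elo rating system for AI design, similar to the systems used in competitive chess and video games. Each model's rating changes after every head-to-head vote, and the size of the change depends on how surprising the result is. When a lower-ranked model “beats” a top-tier model, it gains more points than it would for beating an equal, and the top-tier model loses correspondingly more.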

This system creates a dynamic and highly accurate generative AI leaderboard that continuously evolves as new models enter the arena and developers refine older ones.
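The standard Elo update can be sketched as follows. This is the textbook chess formula, shown under assumptions: the K-factor of 32 and the 400-point scale are common chess defaults, and DesignArena's actual parameters and any variations it applies are not documented here. The function names are illustrative.

```python
def expected_score(rating_a, rating_b):
    """Expected score (between 0 and 1) of A against B under the Elo model."""
    return 1.0 / (1.0 + 10 ** ((rating_b - rating_a) / 400.0))


def update_elo(winner, loser, k=32.0):
    """Return the new (winner, loser) ratings after one head-to-head vote.

    k controls how far a single vote moves the ratings; 32 is an assumed
    default borrowed from chess, not a documented DesignArena setting.
    """
    gain = k * (1.0 - expected_score(winner, loser))
    # Zero-sum update: whatever the winner gains, the loser gives up.
    return winner + gain, loser - gain


# Upset: a 1400-rated model beats a 1600-rated one.
print(update_elo(1400, 1600))
# Expected result: a 1600-rated model beats a 1400-rated one.
print(update_elo(1600, 1400))
```

With these assumed parameters, the upset moves each rating by roughly 24 points, while the expected result moves each by only about 8. That asymmetry is what lets a strong newcomer climb the leaderboard quickly.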

Key Features of DesignArena

DesignArena isn’t just a simple voting site; it is a comprehensive visual quality assessment tool. Here are the features that make it a cornerstone for the modern AI ecosystem:

- Head-to-Head AI Comparisons: Directly compare the creative logic of two different engines under identical conditions.

- Unbiased Evaluation Environment: By stripping away labels, the platform ensures that only the best pixels win.

- Multi-Modal Support: DesignArena isn’t limited to just images. It covers UI components, full web layouts, and iconography, and it is expanding into video and 3D assets.

- Real-Time Leaderboard: Watch the rankings shift in real time as the community interacts with new model releases.

- Deep-Dive Analytics: For power users, the platform offers insights into which models excel at specific styles, such as “photorealism” vs. “vector illustration.”

| Feature | Description | Benefit for Users |
|---|---|---|
| Head-to-Head Comparison | AI models compete using the same prompt | Fair and direct performance comparison |
| Blind A/B Testing | Outputs shown without revealing model names | Eliminates bias in voting |
| Crowdsourced Voting | Users vote on best design | Real human feedback |
| Elo Rating System | Dynamic ranking based on wins/losses | Accurate and constantly updated ranking |
| Generative AI Leaderboard | Public ranking of AI models | Easy identification of top performers |
| Multi-Modal Support | Supports UI, images, and more | Versatile testing across use cases |
| Visual Quality Assessment | Focus on aesthetics and usability | Better real-world decision making |

Why DesignArena is Essential for the Industry

Why do we need a dedicated AI design benchmark? The answer lies in the limitations of traditional software testing. In coding or math, an answer is either right or wrong. In design, an answer must be “effective,” “appealing,” and “functional.” Automated systems cannot yet feel the emotional impact of a color palette or the intuitive flow of a UI layout.

DesignArena fills this gap by quantifying “design taste.” It provides a bridge between raw computational power and human aesthetic standards. For developers, it provides a feedback loop to improve their models. For businesses, it serves as a procurement guide, helping them decide which API to integrate into their products based on proven performance rather than marketing promises.

By using side-by-side AI design testing, the platform highlights the nuances that technical whitepapers often miss. It exposes “hallucinations” in UI design, such as nonsensical text or impossible button placements, which a human eye catches instantly but a computer might overlook.

Who Should Use DesignArena?

DesignArena is designed for a broad spectrum of professionals who are either building with AI or using AI to enhance their creative workflows.

UI/UX Designers

If you are looking for an AI-generated UI comparison, this platform is your best resource. You can see which models understand grid systems, hierarchy, and modern design trends, helping you pick the right tool for wireframing and prototyping.

Content Creators and Marketers

Marketers need consistent, high-quality visuals. DesignArena allows you to see which models produce the most brand-ready assets, saving you hours of trial and error with different tools.

AI Researchers and Enthusiasts

For those interested in the “why” behind AI performance, the generative AI leaderboard offers a fascinating look at how different architectures handle creative tasks. It is the ultimate playground for seeing how the latest updates from OpenAI, Anthropic, or Google stack up against open-source giants like Stable Diffusion.

Pros and Cons: A Balanced View

While DesignArena is a revolutionary AI design benchmark, it is important to understand its strengths and its limitations to use the data effectively.

Pros

- Real Human Feedback: Captures the “soul” of design that algorithms miss.

- Fair Comparisons: Blind testing eliminates brand name bias.

- Accessibility: The interface is intuitive, allowing anyone to contribute to the global benchmark.

- Up-to-Date: New models are added almost as soon as they are released.

Cons

- Subjectivity: Even with professional voters, design is inherently subjective; what one person loves, another may dislike.

- Community Dependency: The accuracy of the leaderboard relies on a high volume of active participants to ensure statistical validity.
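The dependence on vote volume can be made concrete with a confidence interval on a model's win rate. The sketch below uses the Wilson score interval, a standard statistical method chosen here purely for illustration. Nothing in this article indicates that DesignArena computes it, and the function name `wilson_interval` and the vote counts are hypothetical.

```python
import math


def wilson_interval(wins, total, z=1.96):
    """Approximate 95% confidence interval for a win rate (Wilson score)."""
    if total == 0:
        return 0.0, 1.0  # no votes yet: the win rate could be anything
    p = wins / total
    denom = 1.0 + z * z / total
    center = (p + z * z / (2 * total)) / denom
    half = z * math.sqrt(p * (1 - p) / total + z * z / (4 * total * total)) / denom
    return center - half, center + half


# The same 60% win rate, observed over 20 votes and over 2,000 votes.
print(wilson_interval(12, 20))
print(wilson_interval(1200, 2000))
```

With 20 votes, the interval spans roughly 0.39 to 0.78, so the model may not actually be preferred at all. With 2,000 votes, it narrows to roughly 0.58 to 0.62. This is why a thinly voted matchup should be read with more caution than a heavily voted one.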

Frequently Asked Questions (FAQ)

1. How does DesignArena prevent voting manipulation?

The platform uses several layers of security, including account verification and pattern detection algorithms, to ensure that no single user or bot can unfairly skew the AI design benchmark results.

2. Can I use my own prompts in the arena?

DesignArena typically uses standardized prompts to keep the leaderboard consistent, so rankings are not based on arbitrary user prompts. However, it regularly hosts community-driven challenges where users submit their own creative briefs.

3. Which models are currently being tested?

The platform covers the major players, including Midjourney, DALL-E 3, Stable Diffusion (various versions), and Adobe Firefly, as well as specialized UI-focused models like Galileo or v0.

4. Is DesignArena free to use?

Yes, participating in the voting process and viewing the public generative AI leaderboard is generally free for the community to ensure the widest possible range of feedback.

5. How is the Elo score calculated specifically?

The score is calculated based on wins and losses against other models. If Model A (high rank) loses to Model B (low rank), Model B gains a significant number of points while Model A drops, reflecting the unexpected outcome.

6. Does it support UI/UX evaluation specifically?

Absolutely. One of the strongest use cases for DesignArena is the AI-generated UI comparison, which focuses on layouts, component logic, and interface aesthetics.

7. How often is the leaderboard updated?

The leaderboard updates in real time. Every time a vote is cast, the Elo ratings of the two models involved are adjusted immediately.

8. What is “Blind A/B testing” in this context?

It means you see two designs (A and B) without knowing which AI tool created them. This ensures that you vote for the best design, not the most famous company.

9. Can companies use this data for their own products?

Yes, many teams use DesignArena as a visual quality assessment tool to decide which generative AI APIs are worth investing in for their specific business needs.

Conclusion: The Future of AI Evaluation

As generative AI continues to evolve, the need for a transparent, community-driven AI design benchmark will only grow. DesignArena has successfully created a space where the quality of an output matters more than the marketing budget of the company that created it.

By combining blind A/B testing for AI models with a robust Elo rating for AI design, the platform provides a level of clarity that was previously missing in the industry.

Whether you are a professional designer trying to stay ahead of the curve or an AI enthusiast curious about the latest tech, DesignArena offers the most accurate reflection of current AI capabilities. It is more than just a leaderboard; it is a movement toward a more aesthetic, functional, and human-aligned AI future.

Are you ready to see which AI truly reigns supreme? Visit DesignArena today, cast your votes, and help shape the future of the world’s most comprehensive AI design benchmark.